Hundreds of billions of dollars are being poured into developing and running frontier AI models.

For everyone who cares about society, we must ask ourselves - to what end?

I believe that AI can drive civilization-scale progress for all of humanity but that this needs us to go beyond raw model intelligence.

Model intelligence is powerful and exciting - but if we care about progress, it's not enough.

Economic history clearly shows that breakthrough technologies - like steam power, electricity and personal computing - are unrealized in their full potential at the start and often require new enabling infrastructure.

My thesis is that civilization-scale progress from AI also requires new infrastructure - and ultimately a complete reinvention of how we understand 'work' and 'companies'.

In particular, I believe that this must revolve around a new agent-native tissue - a type of infrastructure that ultimately collapses many of the artificial silos and boundaries which today's knowledge workers operate within.

This is a working draft, which we'd love to discuss with you if you're serious about agent-native infrastructure or civilization-scale progress. Please reach out to richard@withnative.ai

The Post-Intelligence Bottleneck

We have normalized what is incredible

One of the evolutionary superpowers of humans is that we are incredibly adaptive.

The flip side of this is that we can take things for granted, because we normalize them.

Every day, we turn on the bathroom lights, brush our teeth and step in the shower. It's pretty mundane.

But this is just one example how our lives are built on the marvels of modern electricity, medicine and plumbing. These things are ordinary to us, but would be truly astonishing to our ancestors.

AI is fast becoming one of those things. Today, we can share a photo with a machine and ask it to write us a poem about it. Within moments, you'll have something better than most humans could do in an hour.

It's more than entertainment. Today, frontier AI models can solve complex coding problems in a world-class level, contribute at the frontier of scientific research, and substantially outperform medical students in assessments.

Just a few years ago, this would have been unthinkable for most of us. Now, with strong open-source models performing comparably, intelligence is becoming a simple utility.

We need more than model intelligence

For ambitious companies doing important innovation, the bottleneck used to be talent, skills and work ethic.

Now, anyone can work with world-class intelligence that produces output 100x faster and 100x cheaper than the most capable humans.

The companies building the foundational model layer are scooping up capital at an ever-increasing rate - investing tens and hundreds of billions into making these models even more powerful, fast and cheap.

It's truly awe-inspiring and jaw-dropping work.

It's also important for us to remember - intelligence is not the end goal.

We already have a technology with a theoretical 100x multiplier potential in both velocity and efficiency. (Crudely, we could even consider that as an overall 10,000x multiplier on productivity - but for this essay we will consider it as a 'mere' 100x.)

It's a civilization-scale delta that is already in our hands with today's models.

Why are we not seeing a civilization-scale impact?

The reality outside of the tech bubble

Those of us in the tech bubble really "feel the AI".

Most people don't feel it in the same way.

- 80% of executives say they've seen no impact on employment or productivity in the past 3 years from AI

- On average, workers think it saves less than 2% of their time.

For those of us immersed in tech, who've "felt the AI" and are ambitious for the world, this is a problem worthy of obsession. What's stopping this 100x technology from having a 100x impact?

It's tempting to say that this is simply an adoption gap. People need to use AI more, or use it better.

If we challenge ourselves to think bigger and bolder, I think we can find a bottleneck that's much deeper and more profound than simply 'adoption'.

The bottleneck is bigger than adoption

To understand why the bottleneck is not adoption, we'll run a thought experiment.

In 2025, about two-thirds of US GDP came from the Fortune 500 companies, with about $20 trillion in combined annual revenue.

Now, let's imagine a fictitious Fortune 500 scale enterprise, 'Generic Services Inc' (GSI). GSI is a global provider of knowledge work services (e.g. software development, legal services or management consulting) - exactly the type of work which today's frontier models already excel at.

In this fictitious, optimistic and somewhat magical scenario, GSI has a clear strategy set by the CEO to go 'all in' on AI-driven operations and productivity (or 'reinvention', perhaps) - which we will assume is embraced enthusiastically by the whole workforce, who have all woken up as talented, conscientious and AI-proficient individuals.

How much more productive would that company be?

As a benchmark, recent history has the Fortune 500 growing revenue at ~5% annually, with strong performers hitting 10-15% (i.e. 1.10x-1.15x).

In this thought experiment, we don't have actual data on what would happen - but we can sketch an illustrative scenario to see where reasonable assumptions lead.

- It almost certainly wouldn't be a 100x increase, or anything close to that.

- It could conceivably be a 10x of some sort... but a 10x on what?

- Perhaps the most conceivable 10x is "the company would grow 10x more quickly than it has" rather than "the company's absolute revenue would increase 10x"

- On current benchmarks, this would optimistically be 100-150% revenue growth - equivalent only to a 2.0-2.5x overall growth on headline revenue

- A 10x increase on headline revenue would be a 100x increase on growth rate, which seems deeply improbable

As such, AI - which we've taken to have 100x potential on productivity - might, even under generous assumptions, result in a mere 2.0-2.5x productivity increase (or less!) at the enterprise-scale.

The precise numbers matter less than the direction: even in a wildly optimistic scenario, we're losing almost all of our AI multiplier. This thought experiment shows that we have an AI productivity haemorrhage - some kind of Post-Intelligence Bottleneck that is much more profound than 'adoption'. At the Fortune 500 enterprise scale, even at 100% adoption and proficiency, we are losing almost all of our AI multiplier.

Extrapolating this pattern out to a national or global economy, we can imagine that - even with otherworldly adoption assumptions - our productivity gains are negligible relative to 'civilization-scale impact'.

So, there's something huge that we're missing out on - and it's much more than an intellectual puzzle. This is a real loss of outcomes for the world.

History can teach us about solving this bottleneck

We might be tempted to accept this gap as a brute fact, and say that there is something inevitable or intrinsic about it - even if AI is a 100x technology, that would never scale to an organization, country or global economy.

I think we can be more optimistic than this.

I don't think that the thought experiment shows that there must be a gap - I think it shows that there is a Post-Intelligence Bottleneck that is not simply 'adoption'.

In particular, I claim that the Post-Intelligence Bottleneck is best explained as solvable infrastructure gap.

It turns out that this is entirely consistent with economic history - transformational technologies often require complementary infrastructure for society to yield the promised benefits.

In the next sections, we'll go deeper into this - first, studying the related economic history; and second, unpacking the Post-Intelligence Bottleneck as an infrastructure gap.

The J-curve of breakthrough technologies

The GPT concept that's far bigger than AI

The acronym 'GPT' exploded into the public consciousness with ChatGPT, itself powered by GPT-3.5 (successor to GPT-3, GPT-2, GPT-1). In that narrow context, GPT stands for Generative Pre-trained Transformer, where a transformer is a neural network architecture pioneered at Google.

We're interested in a much bigger concept of GPT: General Purpose Technologies. (This is what we'll use 'GPT' for in this essay.)

In short, GPTs are the big, era-defining technologies - such as steam power, electricity, personal computing.

There's not many technologies like this, but they exist - and become era-defining by being "powerful enough to accelerate the overall course of economic progress".

AI seems to uncontroversially be a GPT - both in a common sense intuitive way (it's a technology with 'general purpose' because you can throw AI at a huge range of problems) and also by the semi-formal classification criteria from the literature:

1. Pervasiveness – The GPT should spread to most sectors.

2. Improvement – The GPT should get better over time and, hence, should keep lowering the costs of its users.

3. Innovation spawning – The GPT should make it easier to invent and produce new products or processes

So, whilst AI in its modern LLM-based incarnation is a relatively recent phenomenon, it is in a lineage of great GPTs that drove enormous gains in productivity and living standards.

AI is probably the most powerful (and the most general) GPT that humanity has seen before - and it may be particularly special in being both a GPT and an 'invention of method of invention'. But there is a rich literature on the economic history of GPTs which we can refer to, to understand the Post-Intelligence Bottleneck - and how to overcome it.

The productivity J-curve

The main central theme in the economic history of GPTs is that they don't typically result in immediate economic transformation.

There can be a substantial gap of many decades before an invention is used across different economies:

- ~40 years for electricity

- ~60 years for railways

- ~120 years for steamboats

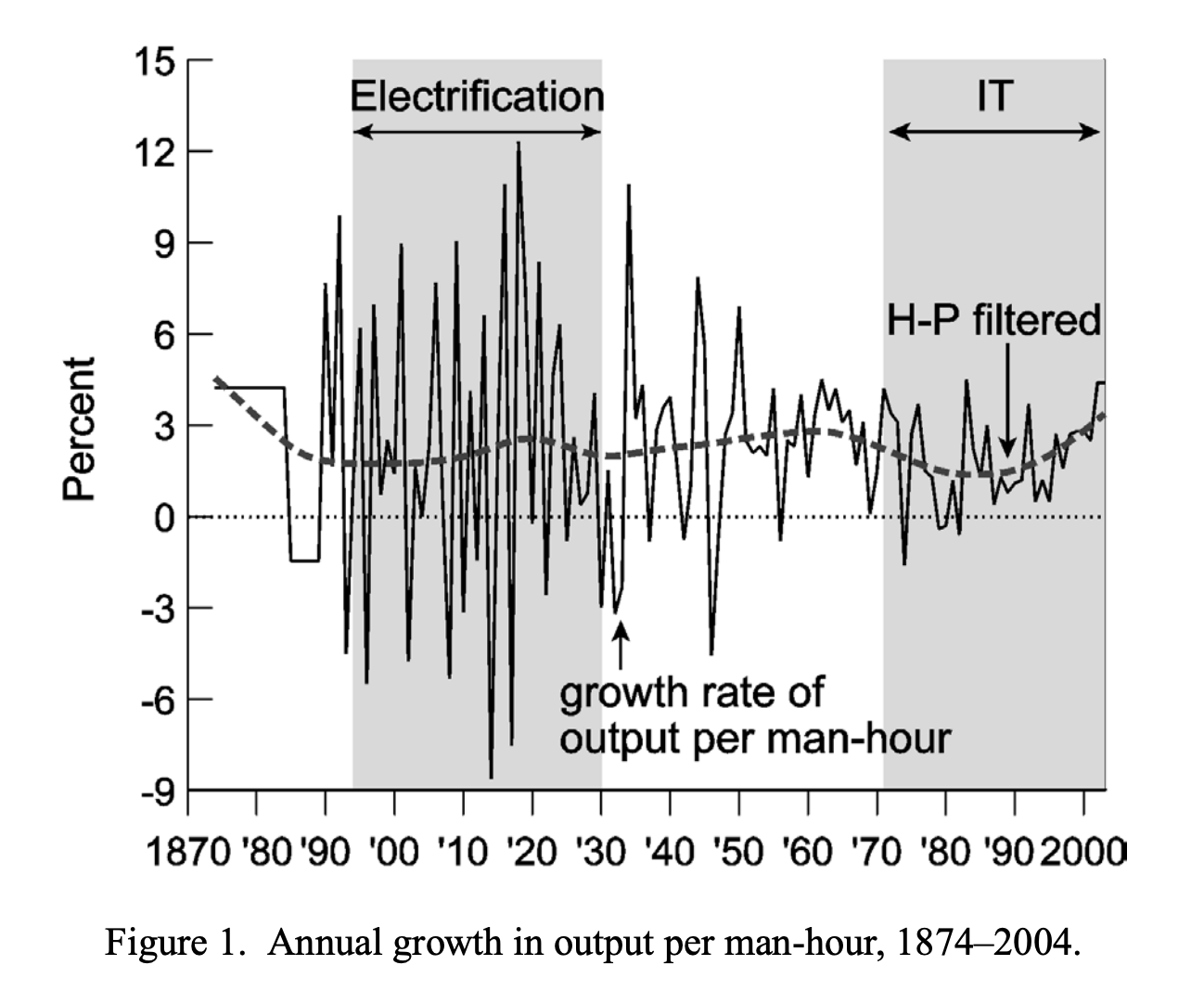

Not only is there a gap on the realization of GPT productive potential - there can even be a dip in productivity in the short-term. This is termed the 'Productivity J-Curve' - so-called because the arc of productivity (a dip down followed by dramatic increase) traces out a rough J.

Why is there a J-curve? It's because GPTs are so transformative, and their potential is so huge, that they can require large, long-term investments in infrastructure to fully exploit them - and even "a fundamental rethinking of the organization of production itself".

We're going to look at two different GPTs, electricity and IT, and show that both of these experienced a J-curve - with two common factors, new infrastructure and new ways of working, ultimately unlocking their productive potential.

The 19th-20th century: electricity

The 1900 Paris Exposition was a celebration of technological progress in the 19th century and an inspiring invitation to imagine a radically different 20th century.

Electricity may have been the most striking aspect of it. One observer - a certain Henry Adams (an intellectual and historian, descended from the 2nd US President, John Adams) - wrote:

It is a new century, and what we used to call electricity is its God.

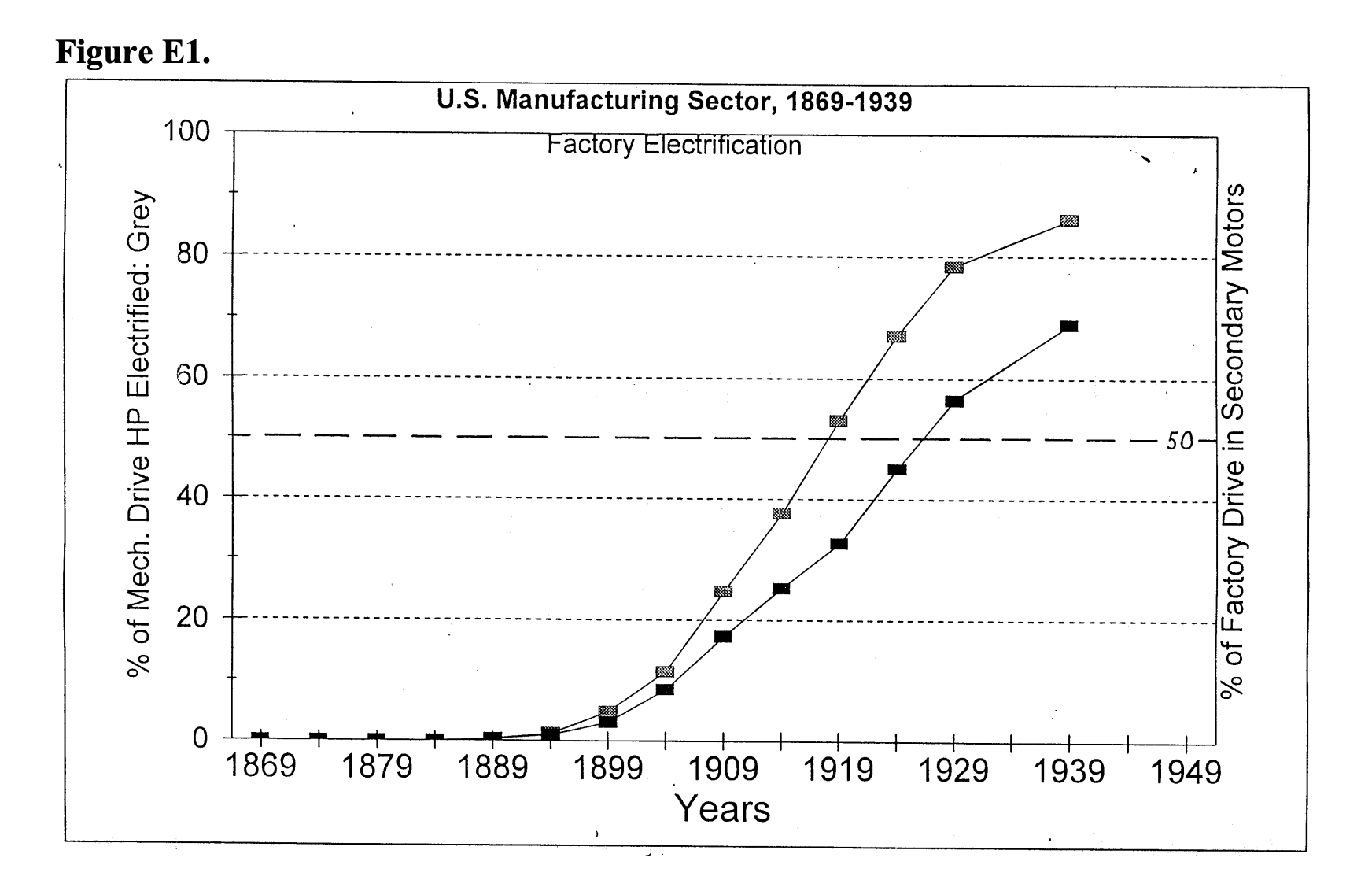

However, this was already 20 years after Thomas Edison had demonstrated his light bulb - and yet electrification still had decades to go before realizing its productive potential. At the time, fewer than 10% of US factories were electrified, and it took a further 20 years for that to reach 50% adoption.

We can understand this J-curve in terms of the costs both new infrastructure and new ways of working.

We can understand this J-curve in terms of the costs both new infrastructure and new ways of working.

- New infrastructure: factories at the time were running on water and steam - and they were both functional and profitable. Investing in electrification would have necessitated both stopping profitable production and making a huge capital outlay.

- New ways of working: the first-movers in electrification, high-growth industries (tobacco, fabricated metals, transportation) that were setting up new factories, found that it wasn't just 'swapping out steam drivers for electrical ones' - it went as wide as "new plant design and the necessary relocation of manufacturing to suitable greenfield sites outside the old urban core districts".

The late 20th century: IT

We can see the same pattern, more recently, with IT. The invention of IT - which researchers define as Intel's 1971 release of their 4004 microprocessor, the first commercially available single-chip microprocessor - was similarly associated with a decrease in trend productivity growth.

At the time, it was an open question: "Will the growth slowdown of the current IT era be followed by a rise in growth in the first half of the 21st century?". Whilst today, the six most valuable companies in the world are all technology companies (NVIDIA, Apple, Microsoft, Alphabet, Amazon, Meta) - it really wasn't apparent to the world at large that tech would be so dominant.

At the time, it was an open question: "Will the growth slowdown of the current IT era be followed by a rise in growth in the first half of the 21st century?". Whilst today, the six most valuable companies in the world are all technology companies (NVIDIA, Apple, Microsoft, Alphabet, Amazon, Meta) - it really wasn't apparent to the world at large that tech would be so dominant.

What explains this? It's the J-curve again. The invention of IT was itself not enough - what empirical research suggests is that it is overall organizational transformation that may have driven more of the productivity gains.

Like electricity, we can understand this as 'new infrastructure' and 'new ways of working':

- New infrastructure for organizational computing - e.g. corporate accounting systems

- New ways of working to make use of organizational computing - with impacts on profound things even like authority relationships and levels of centralization

Using GPT theory to explain the Post-Intelligence Bottleneck

So far, we've seen that:

- There is a Post-Intelligence Bottleneck that is stopping us from realizing AI's productive potential

- AI is a General Purpose Technology (GPT), and history shows that GPTs like electricity and IT have required new infrastructure and ways of working to become fully realized

Now, I'll bring these together - and suggest how the Post-Intelligence Bottleneck highlights our need for new infrastructure and ways of working.

Revisiting our scenario of mass adoption

We previously had imagined a fictitious Fortune 500 scale company, Generic Services Inc (GSI):

GSI is a global provider of knowledge work services (e.g. software development, legal services or management consulting) - exactly the type of work which today's frontier models already excel at.

In this fictitious, optimistic and somewhat magical scenario, GSI has a clear strategy set by the CEO to go 'all in' on AI-driven operations and productivity [...] which we will assume is embraced enthusiastically by the whole workforce, who have all woken up as talented, conscientious and AI-proficient individuals.

How much more productive would that company be?

We concluded that it might plausibly be no more than a 2.0-2.5x gain in overall company productivity, and possibly far less - a massive AI productivity haemorrhage.

It would still be a 10x increase on current typical rates of growth - but fall phenomenally short of how AI could, in principle, augment productivity by 100x (if not more).

Sceptics might posit that this is the structure of an organization is necessarily inefficient in some ways - through things like coordination costs, information loss and span-of-control limits.

But I want to suggest that:

- at least some of this Post-Intelligence Bottleneck is a gap in infrastructure and ways of working - similar to the economic history of electricity and IT

- with the right infrastructure, the typical organizational inefficiencies might be dramatically (but perhaps not entirely) mitigated

The intuitive case for new infrastructure and ways of working

One key fact we must remember is this: AI is not human.

This might seem trite, but it's the foundational pillar for the more precise point: we should not assume that organizational or productivity infrastructure built for humans is appropriate or optimal for AI agents.

For most of the past 30 years, knowledge work has operated within a central paradigm of artifacts created through GUIs and a human delegation tree:

- The organization runs a pyramid-like chain of command. At is simplest, this can be conceived as: CEO -> management -> ICs (individual contributors).

- ICs are the ultimate executors for a lot of delegated work, which typically takes place through GUIs (graphical user interfaces) for things like email, documents and spreadsheets.

- Management and the CEO use a mixture of meetings and GUIs for 'work about work', e.g. documenting what work should be done and communicating that down the delegation tree.

With AI, what's already emerging is humans sitting over a delegation tree for AI agents. This can be 1:1* (one human to one agent), 1:M* (one human to many agents), or even 1:M*:M* (one human to many 'orchestrator agents', each of whom manages many 'worker agents').

There are two dimensions along which we can see gaps opening up, in infrastructure and ways of working:

- Where does the work happen efficiently? If AI agents are the 'doers', are GUIs the best place for the creation of knowledge work artifacts?

- How is the work organized efficiently? If humans have delegation trees composed of multiple agent layers, are meetings and GUIs the best way to delegate and coordinate?

Three gaps in agent infrastructure

Across these two dimensions, there are three gaps in today's infrastructure behind the Post-Intelligence Bottleneck.

They correspond roughly to a 'how', 'what' and 'why' of agent activity:

- The interface gap: the 'how'

- The coordination gap: the 'what'

- The provenance gap: the 'why'

The interface gap: the 'how' of agent activity

Up until the 21st century, most humans navigated around using physical maps. We'd pull it out, locate our starting point, and try to manufacture a route from there to our desired destination, with a combination of eye-balling, finger-tracing, and received wisdom / heuristics.

Now, imagine asking your favourite AI model, "Can you help me navigate from my home to this office address, in a route that goes via this local school?"

It'd be cool and technically impressive if the AI was able to parse a physical map, process the route information within and digest it into some human-facing directions for you.

However, with the way that frontier AI works today, it'd also be incredibly inefficient.

There's a more machine-friendly way of navigating: GPS, a technology that is purpose-built for machine consumption.

The breakthrough in navigation came not from 'training machines to navigate the way that humans do' - it came from building an entirely new method and interface that achieved the same outcome in a far more efficient manner.

Lots of incumbents may try to retrofit agent-facing interfaces, like MCP, onto their existing products. But it seems plausible that the best agent-native interface for knowledge work will emerge from being agent-first.

For instance, GPS isn't 'a new interface for a map' - it's an entirely different way of representing spatial information, with comes with a more machine-friendly interface baked-in.

This is the interface infrastructure gap. For agents carrying out knowledge work on our behalf, we need to figure out:

- What do agents need to work at their best?

- What interfaces would support that?

The coordination gap: the 'what' of agent activity

Even at human-speed, effective team coordination is a real problem - one which has birthed an industry and several frameworks like agile, OKRs and RACI.

With agents, the problem is disproportionately bigger.

Imagine if a new 'self-driving rocket car' suddenly spawned into existence and started driving on our roads with the following characteristics:

- Rocket cars travel 100x faster than human cars

- Rocket cars are invisible to other rocket cars

- Rocket cars can spontaneously appear and disappear without human intervention

- The total number of rocket cars on the roads can oscillate wildly in a matter of seconds

- The license plate of a rocket car changes every few seconds

It would be enormously difficult to have an effective transportation system based on our current roads and traffic infrastructure.

This colorful thought experiment is a crude way to intuit some of the aspects of agent coordination:

- We have many more actors. Every human can have multiple agents working under them.

- The precise number of actors is unpredictable and incredibly dynamic. It's possible to scale up and down ephemeral agents very quickly.

- It's not easy for a human principal to accurately anticipate coordination problems between agents, when they might be context-switching between multiple agents.

- It's harder in general to anticipate coordination problems when actors (agents in this case) are moving so much faster.

- Agents can't really 'own' tasks or projects in the human sense of 'being accountable' for it. They don't have persistent, stable identity or existence. (There is no 'agent colleague, Diana' - just an illusion created from a stateless LLM response to input about its purported identity and previous output.)

This is the coordination infrastructure gap. For humans overseeing fleets of fast-moving, ephemeral agents, we need to figure out:

- How do we maintain coherent, real-time awareness of what agents are doing across an organisation?

- How do we prevent and resolve conflicts between agents acting on behalf of different humans?

- How do we preserve meaningful human accountability when the 'doers' are stateless and ephemeral?

The provenance gap: the 'why' of agent activity

Chesterton's Fence is a philosophy of change. It takes its name from a parable by the eponymous G. K. Chesterton, who wrote of an imaginary fence:

There exists in such a case a certain institution or law; let us say, for the sake of simplicity, a fence or gate erected across a road. The more modern type of reformer goes gaily up to it and says, "I don't see the use of this; let us clear it away." To which the more intelligent type of reformer will do well to answer: "If you don't see the use of it, I certainly won't let you clear it away. Go away and think. Then, when you can come back and tell me that you do see the use of it, I may allow you to destroy it.

This is a principle that many a software engineer has run afoul of - making changes to a codebase which seems like relatively uncontroversial 'cleaning up', because they don't see the utility of the code that they're removing, only to crash the production app, as it turns out there was some obscure quirk or edge case which the removed code was a patch for.

It's probably not right to dogmatically follow Chesterton's Fence - there may be times where the original intent or rationale of some code, ritual or design is irretrievably lost, and that unfortunate fact shouldn't be an indefeasible blocker on iteration.

However, Chesterton's Fence shows us the value of knowing "why are things the way they currently are?"

Imagine coming back to a financial model in a shared spreadsheet, and noticing that it looks completely different to how you last saw it - both in its structure and in what seem to be its input assumptions.

For this spreadsheet to be useful to you, you probably need to understand a few key things:

- Who changed it?

- Why did they change it?

- What's the basis of the new assumptions?

When we work only with humans, this is default-recoverable.

When we work with humans who each oversee a fleet of agents, this becomes far harder.

- The spreadsheet's history can't tell you whether its changes were made by a human or by an agent

- If it was an agent, the human whose agents made the edits might not even be aware that it was their agents if they're overseeing a fleet of agents

- It might even have been near-simultaneous (and possibly mutually inconsistent) edits by two agents with two different human principals, neither of which was aware of the other agent's intended changes

This is the provenance infrastructure gap. We need a systematic way of identifying:

- Which changes were made by humans or agents?

- For agent-authored changes, who authorized them?

- For agent-authored changes, what input (e.g. decisions, constraints, context) was given to the agent?

Towards agent-native infrastructure

We've argued above that the Post-Intelligence Bottleneck stems from gaps in agent-native infrastructure: provenance infrastructure, product/interface infrastructure and coordination infrastructure.

History has shown us that GPTs can require new infrastructure and new ways of working. Now, we'll show that these are also inseparable for AI. The new way of working emerges from collapsing the fragmented layers of today's knowledge work stack, and that collapse is itself the infrastructure.

In this section, we'll sketch an outline of what this looks like, and show some early experimental results.

Knowledge work's fragmented stack

Today's knowledge work infrastructure is built around artifacts like documents, spreadsheets and presentations - outputs of work.

Alongside these artifacts sits 'work about work': the alignment, communication and coordination layer. This creates an industry for project management and team communication software - e.g. Atlassian, Notion and Asana.

The problem now is that context is spread across multiple places. To manage this, we have yet another layer, which we might (tongue-in-cheek) call 'work about work about work'. At this level, software helps knowledge workers either search amongst all their fragmented context (e.g. Glean) or create patches to join them together (e.g. Zapier).

Collapsing boundaries with new infrastructure

We discussed earlier three types of infrastructure gaps: interface, coordination and provenance.

These correspond roughly to the three fragmentation layers which we briefly outlined above.

| Agent activity | Fragmented layer | |

|---|---|---|

| Interface | How | Work |

| Coordination | What | Work about work |

| Provenance | Why | Work about work about work |

By staying within these separate layers, we limit ourselves to incremental innovation. (It's still valuable - an AI co-pilot for Google Slides could genuinely save me time - but it doesn't tackle the fundamental infrastructure gaps or the Post-Intelligence Bottleneck.)

If we're serious about civilization-scale impact, we should be much more ambitious. The real opportunity here is to build the key infrastructure for agent activity and smash the artificial boundaries in the fragmented knowledge work stack.

Today's fragmented stack basically contains distinct 'types' of record (e.g. documents, goals, decisions) which are artificially walled off from each other.

- Documents, slides and spreadsheets live in the 'work' (office suite) walled garden.

- Goals, projects and tasks live in the 'work about work' walled garden.

- Decisions and constraints are implicit and nebulous. They might be found jotted down in a document ('work'), or they might be found in our project management ('work about work').

By bringing all the record types together and implementing structured relationships between them - in a way that is only possible when they're all part of the same system - we can solve the infrastructure gaps for agent activity and smash the artificial boundaries.

- Every record type becomes a node on a knowledge graph: one where decisions, tasks and documents are all first-class citizens, regardless of domain. You can link your customer CRM entry to a child note about them, and link that child note to a related product ticket.

- We get an agent-native interface through a common entry point to everything on our knowledge graph. Agents can easily gain context by tracing through connected nodes, and get the customer context from the related task. There's no latency from depending on external services.

- We get agent-native coordination through baking in new primitives that incumbents don't have. Documents and tasks can be

claimedby ephemeral agents, so other agents are aware - but with timestamps so that agents can identify stale claims. - We get agent-native provenance through the relationships between records. Tasks can be

implemented_byslides; projects can besuperseded_bydecisions; documents can bedrawn_fromspreadsheets.

These links are key. If agents can produce documents, code and analyses cheaply and fast, then artifacts are no longer the scarce output. What becomes scarce - and therefore valuable - is the judgment that shaped them: the decisions made, the direction given and the constraints provided.

The human's primary contribution in an agent-native world is directing, deciding, constraining and reviewing.

In a world of legacy GUIs, these get lost in transient agent conversations, unstructured markdown sprawl or opaque memory structures.

In our agent-native infrastructure, we can look at every goal, decision, task and document, and see the full history of agent activity, the authorizing scope of human principals, and the traceable path to an originating task or goal.

Early experiments in Native infrastructure

For the past 8 weeks, I've been building and testing in this space.

Native is our first experiment. It's an agent-first workspace for knowledge work.

AI agents can connect to Native via MCP and use it track decisions, manage your calendar, operate a CRM, organize your projects and draft your documents.

We've been dogfooding it - whilst the very early days of Native used a different project management tool, after a few days we switched entirely to building Native using Native.

We're excited to share some of the early results from it.

To make this concrete, here are two real conversations between me and Claude, where Claude is connected to my Native workspace via MCP. These are unedited transcripts — longer tool outputs are collapsed by default in Claude Code.

Sample conversation #1: self-organizing workspace

Here's a conversation I had with Opus 4.5, via Claude Code.

It has a minimal CLAUDE.md - basically just pointing it to use the bootstrap MCP tool from our server to get orientated.

❯ I got my Kaitlyn, an ML engineer at Kraken Technology, to test setup; I

watched her and took some notes here, record 35a6908

can you help me organize them somewhere better? feel free to err on side

over-exploring

⎿ auth_user_id: "a0081065-****-****-****-************"

workspaces:

… +221 lines (ctrl+o to expand)

⏺ th - get_record (MCP)(ids: ["35a6908"], include_full_content: true)

⎿ Path: TeamHive HQ [6746446] -> Setup observations

Record [35a6908]: Setup observations

Type: note

… +9 lines (ctrl+o to expand)

⏺ Let me explore the workspace structure to find the best home for these

observations.

⏺ th - get_structure (MCP)(record_id: "0974ae4", depth: 3, max_children: 20)

⎿ Richard & Kaitlyn [0974ae4] (workspace)

└── Japan Trip, March 2026 [37ba12c] (project)

├── Ideas [563941d] (collection) - 21 notes

└── Planning [050a8a3] (collection) - 6 tasks

⏺ th - query_record (MCP)(search: "onboarding setup", parent_id: "6746446",

scope: "descendants", format: "text", limit: 20)

⎿ Found 19 records:

Under TeamHive HQ → Workstreams → Product & Engineering → UI & Web Inte

… +81 lines (ctrl+o to expand)

⏺ th - query_record (MCP)(search: "llm.txt", parent_id: "6746446", scope:

"descendants", format: "text", limit: 10)

⎿ Found 10 records:

Under TeamHive HQ → Workstreams → Brand & Strategy [ec25a73]:

… +39 lines (ctrl+o to expand)

⏺ th - get_structure (MCP)(record_id: "7f61e81", depth: 3, max_children: 20)

⎿ This container has 22 direct children. Consider organizing related item

s under goals or collections.

… +2 lines (ctrl+o to expand)

⏺ th - get_record (MCP)(ids: ["8cf3869","2dd556a","768361b"],

include_full_content: true)

⎿ Found 3 of 3 records:

---

… +306 lines (ctrl+o to expand)

⏺ th - query_record (MCP)(search: "Kaitlyn", format: "text", limit: 10)

⎿ Found 10 records:

Under (root):

… +30 lines (ctrl+o to expand)

⏺ th - query_record (MCP)(parent_id: "7f61e81", format: "text", limit: 25)

⎿ Found 22 records under TeamHive HQ → Workstreams → Product & Engineerin

g → Onboarding & Landing [7f61e81]:

• [27df9e7] Prioritize stale facts resolution for demo readiness (dec

… +39 lines (ctrl+o to expand)

⏺ Good context gathered. The note has 4 observations from Kaitlyn's setup test —

a mix of bugs and a UX idea. Let me turn these into proper actionable records

under the right parents in Onboarding & Landing.

⏺ th - query_record (MCP)(search: "early adopter prospects", parent_id:

"6746446", type: "collection", format: "text", limit:

5)

⎿ Found 1 records under TeamHive HQ → Entities [171c522]:

• [c7c08b3] Early Adopter Prospects (collection) [crm]

⏺ th - get_record (MCP)(ids: ["3529b80"], include_full_content: true)

⎿ Path: TeamHive HQ [6746446] -> Workstreams [b01ed90] -> Product & Engin

eering [6252391] -> Onboarding & Landing [7f61e81] -> MCP server instal

lation flow for new users

… +40 lines (ctrl+o to expand)

⏺ th - get_structure (MCP)(record_id: "c7c08b3", depth: 2, max_children: 15)

⎿ ⚠️ Warning: This container has 108 direct children. Consider organizing

related items under goals or collections.

… +17 lines (ctrl+o to expand)

⏺ Now I have the full picture. Let me create the organized records — a Kaitlyn

entity under Early Adopter Prospects, a proper user testing session note, and

actionable bug/improvement tasks under Onboarding & Landing.

⏺ th - create_record (MCP)(actor: "agent:worker", records: [{"title":"Kraken

Technology","type":"organization","parent_id":"c7c08b3

","reason":"Kaitlyn's company - adding as early

adopter prospect from setup testing","summary":"ML

engineering company. Kaitlyn tested TeamHive setup as

early adopter."},{"title":"Kaitlyn setup test

observations","type":"note","parent_id":"7f61e81","tag

s":["user-testing","onboarding"],"reason":"Organizing

raw setup observations from Kaitlyn's test session

into structured note under Onboarding &

Landing","summary":"Observations from watching Kaitlyn

(ML engineer, Kraken Technology) test MCP setup

flow.","body":"#### Context\n\nRichard watched Kaitlyn

(ML engineer at Kraken Technology) go through the

TeamHive setup flow and noted issues.\n\nSource:

[[35a6908]]\n\n#### Findings\n\n###### Bugs\n1.

**`/llm.txt` URL incorrect** — needs to point to `www`

subdomain\n2. **Claude Code MCP config missing `type`

property** — needs `\"type\": \"http\"` in the config

template\n3. **Claude Code config path wrong** —

should be `~/.claude.json`, not whatever is currently

shown\n\n###### UX Improvements\n4. **Copy-paste friendly

setup** — add a \"copy and paste / point Claude to

this\" option so users can easily grab the

config"},{"title":"Fix /llm.txt URL to use www subdoma

in","type":"task","parent_id":"7f61e81","tags":["bug",

"onboarding","complexity:trivial"],"reason":"Bug found

during Kaitlyn's setup test - /llm.txt has wrong

URL","summary":"The URL in /llm.txt needs to be

corrected to point to the www

subdomain."},{"title":"Fix Claude Code MCP config: add

type property and correct

path","type":"task","parent_id":"7f61e81","tags":["bug

","onboarding"],"reason":"Two bugs found during

Kaitlyn's setup test with Claude Code config

template","summary":"Claude Code config template needs

type: \"http\" and correct path

(~/.claude.json).","body":"Two issues found during

user testing:\n\n1. The MCP config template for Claude

Code is missing the `\"type\": \"http\"` property\n2.

The config file path should be

`~/.claude.json`\n\nSource:

[[35a6908]]"},{"title":"Add copy-paste friendly MCP

config to setup flow","type":"task","parent_id":"7f61e

81","tags":["onboarding","ux"],"reason":"UX

improvement suggested by observing Kaitlyn's setup

experience","summary":"Add a 'copy and paste / point

Claude to this' option so users can easily grab the

MCP config.","body":"During user testing, it was

observed that having a ready-to-copy config block (or

a URL you can point Claude at) would smooth out the

setup experience.\n\nSource: [[35a6908]]"}])

⎿ Created 5 records:

5374ee5 organization: Kraken Technology

070f387 note: Kaitlyn setup test observations

… +4 lines (ctrl+o to expand)

⏺ Now let me add Kaitlyn as a person under Kraken Technology and link the

observation note to the original raw note.

⏺ th - create_record (MCP)(actor: "agent:worker", records: [{"title":"Kaitlyn","

type":"person","parent_id":"5374ee5","tags":["ml-engin

eer","adopter:testing"],"reason":"Adding Kaitlyn as

person entity - she tested the setup

flow","summary":"ML engineer at Kraken Technology.

Tested TeamHive MCP setup flow."}])

⎿ Created person cb3ac57: Kaitlyn

⏺ th - manage_links (MCP)(record_id: "070f387", operation: "add", actor:

"agent:worker", links: [{"target_id":"35a6908","relatio

nship":"relates_to","note":"Structured version of raw

observations"}])

⎿ Added 1 link(s)

⏺ Done. Here's what I organized from your raw note:

Structured observation note → 070f387 under Onboarding & Landing, linking back

to your original note 35a6908

3 actionable tasks (all under Onboarding & Landing):

- 0819713 — Fix /llm.txt URL to use www subdomain (tagged bug,

complexity:trivial)

- 3bf1f61 — Fix Claude Code MCP config: add type: "http" + correct path to

~/.claude.json (tagged bug)

- edf8109 — Add copy-paste friendly MCP config to setup flow (tagged ux)

Entity records for future CRM tracking:

- 5374ee5 — Kraken Technology (under Early Adopter Prospects)

- cb3ac57 — Kaitlyn (person, ML engineer)

✻ Brewed for 2m 22s

For me as a user, the main cool thing about this is that my workspace has become self-organizing.

With every note-taking or workspace product that I've used in the past before, there have been two pain points for me:

- It's hard to figure out the right organizing structure and hierarchy. There are tonnes of things to figure out: How flat vs deep should I go? Which concerns are conceptually related and should be in the same folder? What are the other big organizing concepts I should use?

- It's even harder to maintain the right organizing structure. I might at least enjoy setting up the structure, whilst I labor under the illusion of "This is going to be the system that finally keeps me organized!". But when it comes to using it, it's often painful to both: (a) remember where things should go; and (b) actually manually relocate them there. (It then gets even worse if you're sharing the workspace with other people...)

Now, with Claude + Native, my workspace organizes itself.

- I took a rough note and stuck it in the workspace root (which is definitely not the right long-term home for it)

- Claude correctly organized it in the CRM part of my workspace: creating proper records for both the person (Kaitlyn) and the mentioned company (Kraken Tech), and even pulling out the actionable tasks and locating them under the appropriate area, with sensible tags

Sample conversation #2: fuzzy recollection

Here's a second sample conversation from February 5th that I had with Opus 4.5.

Two small points of pre-emptive context to avoid confusion:

- At the time of this conversation, I was experimenting with HiveNative as a name; originally, it had been TeamHive. Subsequently, I shortened 'HiveNative' to 'Native'.

/hivenative:scope (MCP)is Claude Code syntax for using an MCP server prompt.scopeis an MCP server prompt that I designed to turning 'vague fuzzy notion' into something tangible. It's pretty similar to a local slash command.

❯ /hivenative:scope (MCP) I think we recently worked on suggesting to agents

(MCP clients) that they can link things back to users in a clickable way, e.g.

`https://teamhive.com/task/[taskId]`.

I think it might be worth us thinking about "what might be a nice shorter URL

for me to get"? e.g. `hive.io` (taken)

⏺ I see you want to think about acquiring a shorter, memorable URL for the

product - since the current approach suggests URLs like

https://teamhive.com/task/[taskId] but a shorter domain would be nicer for

users.

Let me first get oriented and understand the current state.

⏺ hivenative - bootstrap (MCP)

⎿ auth_user_id: "a0081065-****-****-****-************"

workspaces:

… +57 lines (ctrl+o to expand)

⏺ Now let me check if there's any existing work or decisions around

URLs/domains.

⏺ hivenative - query_record (MCP)(search: "URL domain short link", parent_id:

"6746446", scope: "descendants", format:

"text")

⎿ [73 of 73 matches | filters: search: "URL domain short link"; parent: 6

746446 (descendants)]

… +374 lines (ctrl+o to expand)

⏺ I found relevant records. Let me get more details on the short redirect domain

goal that already exists.

⏺ hivenative - get_record (MCP)(ids: ["224f8eb","f3a08c0","5c9cd27"],

include_full_content: true)

⎿ Found 3 of 3 records:

---

… +191 lines (ctrl+o to expand)

⏺ Excellent! There's already substantial work here. Now let me also check the

recent URL affordance work that was mentioned.

⏺ Searched for 1 pattern, read 1 file (ctrl+o to expand)

⏺ Now I have good context. Let me also check on the recent rebrand - I see

mentions of both "TeamHive" and "HiveNative".

⏺ hivenative - query_record (MCP)(search: "rename rebrand HiveNative domain",

parent_id: "6746446", scope: "descendants",

limit: 10, format: "text")

⎿ [9 of 9 matches | filters: search: "rename rebrand HiveNative domain";

parent: 6746446 (descendants)]

… +45 lines (ctrl+o to expand)

⏺ Good context. I see the rebrand to HiveNative is recent (Feb 4), and the

existing short redirect domain research was done on Feb 2 when it was still

"TeamHive". Let me get the rebrand decision to understand the naming

situation.

⏺ hivenative - get_record (MCP)(ids: ["a9bb4f4"], include_full_content: true)

⎿ Path: HiveNative HQ [6746446] -> Workstreams [b01ed90] -> Brand & Strat

egy [ec25a73] -> Branding & Naming [a068ed0] -> Rebrand to HiveNative

Record [a9bb4f4]: Rebrand to HiveNative

… +90 lines (ctrl+o to expand)

⏺ Now I have the full picture. Let me summarize what I see:

---

What I See

There's already existing work on this:

1. Goal [224f8eb]: "Short redirect domain for TeamHive short IDs" — contains

detailed research from Feb 2

2. Goal [f3a08c0]: "Integrate short redirect URLs into TeamHive UI" — depends

on the domain being acquired

3. Recent rebrand to HiveNative (Feb 4) — the domain research predates this

and uses "TeamHive" naming

The existing research identified candidates like thv.sh, thive.to, thive.io,

etc. — all based on "TeamHive". With the rebrand to HiveNative, this research

needs updating.

Concerns Identified

Concern A: Domain Selection

- What short domain to acquire for HiveNative

- The "thive" candidates no longer make sense post-rebrand

- Need fresh research with "hive" or "hivenative" as the root

- Independence: must be decided before infrastructure work

Concern B: Redirect Infrastructure (already decided)

- Cloudflare Workers for redirects

- 302 temporary redirects

- Already scoped in existing goal

Concern C: UI Integration (already scoped)

- Exists as [f3a08c0], depends on domain acquisition

- Blocked until domain is live

Assessment

This is really one focused piece of work: updating the domain selection

decision post-rebrand. The infrastructure and UI concerns are already scoped

separately.

The existing goal [224f8eb] captured the pre-rebrand research well. We need to

either:

- Update that goal with new HiveNative-aligned candidates, or

- Make a domain selection decision that supersedes the "Decisions Deferred"

section

---

Does this breakdown match your mental model? Should we focus on researching

HiveNative-aligned short domain options?

✻ Cooked for 1m 23s

As a user, here's a couple of things that I thought was cool about this (and therefore worth saving).

-

I feel liberated from having to remember, search or organize things.

- It's enough for me to give a vague fuzzy pointer to Claude - "I think we recently worked on X" - and it can figure out what I mean from our workspace.

- Previously, I might have had to dig around in some file tree or search modal, and intentionally organize things in a way which will make it easier for future me. Now, Claude can do it all.

-

It validates that Claude is able to use the workspace in a smart way.

- Claude goes 'wide then deep' - its first search query returns 73 records, and it picks a relevant 3 specific ones to look at in full.

- It shows good judgement in figuring out when it should keep pulling on a thread:

- Now I have good context. Let me also check on the recent rebrand - I see mentions of both "TeamHive" and "HiveNative".

- Good context. I see the rebrand to HiveNative is recent (Feb 4), and the existing short redirect domain research was done on Feb 2 when it was still "TeamHive". Let me get the rebrand decision to understand the naming situation.

- (In a certain way, this isn't that surprising - intuitively, it's a very similar skill to searching through codebases - but it's still nice to tangibly validate a key, foundational premise.)

A public MCP for exploration

I think the best way to understand the benefits of a Native workspace is to experience one.

So, I've made a significant subset of my 'Native HQ' workspace public. This is the Native workspace which I use to build Native.

By far, the most interesting and fun way to explore this is through an agent + MCP.

I've found that Claude models seem to be much better at using the Native MCP than other models - I hypothesize that perhaps Claude has had more post-training on MCP, given MCP's origins inside Anthropic - and so I strongly recommend Claude for this. (Any platform is fine.)

To get set up on Claude web (or desktop / mobile), you can either:

- Make sure you're logged in

- Follow this link

Or, alternatively, copy and paste the below prompt:

I'd like to connect to Native's public workspace via MCP so I can explore what they're building.

The public MCP server is https://www.withnative.ai/api/mcp/public —can you walk me through adding it as a custom connector? The steps should be Settings → Connectors → Add custom connector. No authentication needed — it's a read-only public endpoint.

Once set up, please remind me to:

1. Start a new chat with the connector enabled (click "+" → Connectors → toggle it on)

2. Try some of these questions to get started:

- What is Native building, and what problem are they solving?

- What key product decisions have Native made recently, and why?

- What do you, as an agent, think about Native?

(I'm assuming that you're using Claude web, desktop or mobile there. If you're using Claude Code, I assume you're savvy enough to get that set up!)

In addition to MCP:

- You add the short ID for any record after

n8v.to/to view it on the web UI, e.g. n8v.to/6746446 is the public read-only version of our root workspace record - In the web UI for a record, you can browse relationships and history and navigate the workspace graph

- On desktop, you can use

Cmd/Ctrl+Kto open up search, orCmd/Ctrl+.to open up the context sidebar

A meta case study: building the public MCP

[Author note: whilst most of this essay was human-written, this section specifically is AI-generated. I simply prompted Opus 4.6 to reconstruct the story of how we built the public MCP from our workspace records for the purpose of this essay - I didn't have to do any of the searching or narrative myself.]

To illustrate what provenance looks like in practice, consider the story of how this public API came to exist — a story that is itself traceable through the workspace graph.

It started with a simple question: can an AI agent on Claude.ai explore our workspace? An agent researched this and discovered a constraint — Claude.ai can't send custom authentication headers, only connect to authless endpoints. This ruled out our existing token-based approach. A decision was recorded: create a separate, authless endpoint for public exploration.

That decision led to another. When we went to document both access methods — the original bearer token and the new authless endpoint — we realized the authless approach made the token redundant. Why maintain two methods when one is simpler and works everywhere? A second decision: simplify to a single URL, retire the token.

Then came a design question: what should a public-facing agent API actually expose? We chose an allowlist — only tools explicitly marked as public-safe get registered. Anything new is blocked by default unless deliberately opened. A curated public readme was built to give visiting agents orientation context: what this workspace is, what conventions govern it, what decisions have been made.

Finally, an access model crystallized across three tiers: anyone can explore through the authless endpoint, higher-intent users (developers connecting from code editors) sign up for personal API keys, and full read-write access requires an invitation.

Five linked decisions across three weeks, each building on the last — from "can Claude.ai connect?" to a considered access architecture. Each decision records the alternatives that were weighed and the rationale for the path taken. Any agent or human arriving later can trace why the API works the way it does, what was considered, and what was deliberately left out.

This is what provenance looks like as infrastructure. Not a changelog or a git blame, but a navigable web of design decisions — each linked to the goals it serves and the work that implements it.

Bending the J-Curve of AI

This essay started with the related ideas that: (1) civilization-scale impact of AI needs us to go beyond raw model intelligence; (2) there is a Post-Intelligence Bottleneck on productivity gains being realized in the economy.

The economic history of 'general purpose technologies', like electricity and IT, shows us that this is not an unusual story. Breakthrough technologies require new infrastructure and new ways of working before their productive potential is realized.

AI is arguably the most powerful general purpose technology humanity has ever produced, but there are infrastructure gaps (in interface, provenance and coordination) that need to be filled for us to unlock its full potential.

History shows the J-curve is not destiny — it's a measure of how long it takes to build the right infrastructure. The faster we build it, the sooner the curve bends upward.

We're early — the shape of agent-native infrastructure will evolve as the technology does. But we believe the direction is clear: the gap between AI capability and AI impact won't close through better models alone. It closes when we build the substrate.

This is an infrastructure problem. And infrastructure problems, once recognized, are solvable.

If you're serious about agent-native infrastructure, we'd love to talk. Reach out to richard@withnative.ai.